AI Voice Cloning Scam

How scammers use artificial intelligence to clone the voices of people you love - and use them to demand money in a fake emergency

AI voice cloning technology can generate a convincing replica of someone's voice from just a few seconds of audio, and that kind of audio is now widely available on social media. A call that sounds exactly like your child or grandchild may not be them at all.

In This Guide

- Overview of the Scam

- How the Scam Works

- Common Variations

- Example Scam Messages or Pop-Ups

- Warning Signs

- Who Scammers Often Target

- What the Scammer Is Trying to Achieve

- What To Do If You Encounter This Scam

- If You Already Paid or Shared Information

- How To Prevent AI Voice Cloning Scams

- Final Safety Advice

Overview of the Scam

AI voice cloning scams use artificial intelligence software to generate a synthetic replica of a real person's voice - typically a family member - and use that cloned voice to call a relative and stage a fake emergency. The call sounds exactly like your son, daughter, or grandchild because the AI was trained on audio taken directly from their social media videos, voicemails, or online content.

This technology has advanced dramatically in recent years. Tools that once required hours of audio training can now produce convincing voice clones from as little as a few seconds of recorded speech. The result is a call that bypasses one of the most instinctive forms of verification - recognizing a loved one's voice - and exploits the protective impulse that follows.

AI voice cloning scams are a newer and more sophisticated evolution of the grandparent scam. The underlying structure - a family member in crisis, a request for immediate payment, an instruction not to tell anyone - is the same. What has changed is the level of realism. A cloned voice removes the doubt that might otherwise make a victim pause, making these calls significantly more effective and more dangerous.

How the Scam Works

AI voice cloning scams combine technical deception with the same emotional pressure tactics used in traditional impersonation fraud.

- The scammer identifies a target - typically an older adult - and researches their family through social media. They identify a family member whose voice can be harvested from publicly available videos, reels, or clips posted online.

- Using AI voice synthesis software, the scammer generates a clone of that family member's voice. Even a short clip - 10 to 30 seconds - is sufficient for modern tools to produce a convincing replica that captures tone, cadence, and speech patterns.

- The scammer calls the target and opens the call with the cloned voice, saying something like "Mom, it's me - I'm in trouble." The familiar voice creates immediate emotional engagement and bypasses initial skepticism.

- After a few lines establishing distress, a second person takes the call - someone claiming to be a lawyer, bail bondsman, or police officer. This person handles the financial request while the emotional weight of hearing the family member's voice lingers.

- The target is told to send money immediately via wire transfer, gift cards, or cash courier, and instructed not to call the family member back or tell anyone else what is happening. The money is gone before the target has had a chance to check whether the call was genuine.

Common Variations

AI voice cloning is used in several different scam scenarios. The family emergency call is the most common, but it is not the only one.

- Grandparent emergency version: The most common application. A grandparent receives a call in a cloned grandchild's voice claiming to be in legal trouble - arrested, in an accident, or detained - and needing immediate bail money.

- Parent emergency version: A parent receives a call in a cloned child's voice. This version is particularly effective because parents of young adults are highly responsive to distress signals from their children.

- Kidnapping simulation: A more extreme version in which a cloned voice is used to simulate a kidnapping. A parent hears what sounds like their child in distress, followed by a "kidnapper" demanding a ransom. This scenario causes severe psychological harm even when no money is ultimately sent.

- Business executive fraud: A cloned voice of a CEO or senior executive is used to instruct an employee - typically in finance or accounting - to authorize an urgent wire transfer. This version targets businesses rather than families.

- Voicemail version: A cloned voice message is left rather than a live call, asking the recipient to call back a specific number. The callback connects to scammers who continue the script.

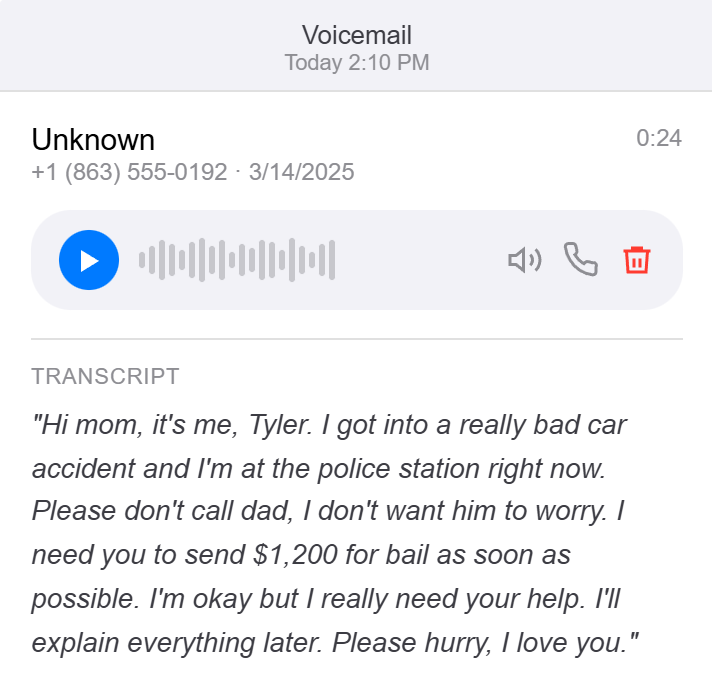

Example Scam Messages or Pop-Ups

The example below shows how an AI voice cloning scam call typically unfolds. The opening seconds of the call are the most critical - the cloned voice is used briefly to establish identity before the scenario is handed off to a human scammer.

The cloned voice is typically used only for the first 10 to 20 seconds of the call - enough to establish recognition and emotional engagement. After that, a live scammer takes over playing the role of an authority figure. The transition can happen very quickly and feel natural, especially when the recipient is already in an emotionally heightened state from hearing what they believe is a loved one in distress.

A typical call sounds like this: a voice that matches your family member says "Mom, I'm so sorry - I got in an accident and I'm in trouble, I need your help." Then a second voice - calmer, more authoritative - takes over: "This is [name], I'm your son's attorney. He has been detained and we need to arrange bail tonight. I need you to keep this between us and go purchase some gift cards right now." The emotional impact of the first voice makes everything that follows feel urgent and real.

Warning Signs

These signals should raise suspicion even when the voice on the call sounds completely familiar.

- The call comes from an unknown number, not from your family member's actual phone. Scammers do not have your family member's real device. Be aware, though, that caller ID can be spoofed, so a familiar number on the screen does not prove the call is genuine.

- The voice sounds right but is off in small ways - unusual phrasing, a different rhythm, or a faintly synthetic quality. Current AI cloning is very good but not always perfect.

- The scenario involves an emergency requiring immediate financial help - arrest, accident, hospital - with no time to verify through other family members.

- You are told not to call your family member back and not to tell anyone else in the family, with the secrecy explained as a legal restriction or as embarrassment. This instruction is what keeps the scam from being exposed, and it should itself be treated as a red flag.

- A second person - a lawyer, officer, or bail agent - takes over the call and begins directing you toward a specific payment method and amount.

- Payment is requested via gift cards, wire transfer, or cash courier. These methods are very difficult to reverse and hard to trace.

- When you ask a specific personal question that only the real family member would know, the voice hesitates, deflects, or the "lawyer" interrupts and redirects the conversation.

Who Scammers Often Target

AI voice cloning scams primarily target older adults who have family members active on social media. The more publicly available audio of a family member - videos, reels, TikToks, YouTube content - the easier it is for scammers to build a usable voice clone. Parents and grandparents of young adults who post regularly online are the most frequent targets.

People who are known to be close to their family members and responsive to distress signals are specifically sought out. Scammers research social media to identify family relationships, geographic separation, and any personal details that make the emergency scenario more plausible. A grandparent whose grandchild is away at college in another state fits the target profile especially well.

What the Scammer Is Trying to Achieve

The goal is the same as in any grandparent or family emergency scam: immediate payment via a method that is hard to trace or reverse. The AI voice component is not a new type of scam - it is an enhancement designed to make the existing scam more convincing and harder to dismiss.

The cloned voice removes the hesitation that might otherwise lead a careful person to pause. When you hear what you believe is your child's or grandchild's voice in distress, the instinct to help overrides analytical thinking almost completely. That is exactly what this technology is being used to exploit.

What To Do If You Encounter This Scam

If you receive a distress call that sounds like a family member, these are the most important steps to take.

- Stay calm. The urgency you are feeling is manufactured. Even if the voice sounds exactly right, take a breath before doing anything.

- Hang up and call your family member directly on their known number - not any number provided during the call. If they answer and are fine, the earlier call was a scam using a cloned voice.

- If you cannot reach them directly, call another family member who would know their whereabouts. Do not send any money until you have independently confirmed there is a real emergency through someone you can verify.

- If you remain on the call, ask a question only the real person could answer. A cloned voice system or a scammer playing a role may not be able to answer correctly - and any hesitation or deflection is informative.

- Do not purchase gift cards, initiate wire transfers, or allow any courier to come to your home until the situation is verified. Real emergencies can withstand a five-minute verification pause. Scams cannot.

- Report the call to the FTC at ReportFraud.ftc.gov and to your local FBI field office, as voice cloning fraud is actively investigated at the federal level.

If You Already Paid or Shared Information

If you sent money before realizing the call was fraudulent, act quickly.

- Call the gift card issuer immediately if you purchased gift cards. Have receipts and card numbers ready. Some balances can still be frozen if you act within minutes.

- Contact your bank right away if you initiated a wire transfer and ask about a wire recall. The sooner you act, the better the chance of partial recovery.

- Tell the family member whose voice was cloned what happened. They deserve to know their voice was used without their consent, and they may be able to take steps to reduce their public audio footprint.

- File a report with the FTC at ReportFraud.ftc.gov and with the FBI's Internet Crime Complaint Center at ic3.gov. Voice cloning fraud is a federal concern and reports contribute to active investigations.

- Do not feel ashamed. AI voice cloning is specifically designed to bypass the verification instincts that protect people. Hearing what you genuinely believe is your child or grandchild in distress is one of the most powerful emotional triggers that exists. Your response was human and understandable.

How To Prevent AI Voice Cloning Scams

A few specific habits make voice cloning scams significantly harder to execute successfully against you and your family.

- Establish a family code word. Agree with your family that in any real emergency, the person calling - or someone calling on their behalf - will use a specific word or phrase only your family knows. If a caller cannot provide it, the call is not verified regardless of how the voice sounds.

- Make it a rule to always call back on a known number before sending money in any emergency scenario, no matter how real the voice sounds. Announce this rule to your family so everyone is aligned.

- Talk to younger family members about their social media audio footprint. Publicly posted videos with clear, extended voice recordings make cloning easier. This is not about restricting their online presence - just understanding the exposure.

- Never act on financial instructions from an emergency call without independent verification. A real lawyer, bail bondsman, or hospital can wait five minutes while you make a confirming phone call.

- Be aware that caller ID can be spoofed. Even if a call appears to come from a family member's number, the voice on the line may not be them. A callback to their real number is still necessary to verify.

Final Safety Advice

AI voice cloning represents a genuine shift in the threat landscape for this type of fraud. For generations, hearing a loved one's voice was one of the most reliable forms of identity verification available to us. That assumption can no longer be made with full confidence. This is an uncomfortable reality, but awareness of it is now a meaningful form of protection.

The response that stops this scam - hanging up and calling the family member on their known number - is simple and takes less than a minute. No real emergency will be made worse by that verification step. If your family member is truly in trouble, you will find out immediately and can respond from a position of certainty. If the call was fraud, you will have protected yourself from a potentially significant loss.

Share this information with the people around you, especially older family members. The family code word is a practical tool that costs nothing and provides meaningful protection. Having that conversation before you need it is far easier than navigating the situation in the moment.